AI: Racist Without Preconception or Bigotry

Article By: David Benjamin

Because AI is so passively absorptive of the data fed into it, it cannot help but reflect the biases — conscious or oblivious — lodged in the hearts and minds of its human developers.

“I don’t understand why we’re spending all this money on artificial intelligence when we can get the real thing for free”

— John M. Ketteringham

The current artificial intelligence (AI) algorithms for facial recognition appear to have the scientific rigor and social sensibility of a Victorian eugenics quack. However, this judgment would not do justice to the army of eugenics quacks who operated (often literally, tying tubes and performing lobotomies) from the 1890s well into the 1930s, because they were driven by a deep-seated racist bigotry.

The National Institute of Standards and Technology recently completed a massive study, finding that state-of-the-art facial-recognition systems falsely identified African-American and Asian faces ten to a hundred times more often than Caucasian faces. “The technology,” noted the New York Times, “also had more difficulty identifying women than men.” It proved uniquely hostile to senior citizens, falsely fingering older folks up to ten times more often than middle-aged adults.

Up against the wall, Grandma!

An earlier study at the Massachusetts Institute of Technology found that facial recognition software marketed by Amazon misidentified darker-skinned women as men 31 percent of the time. It thought Michelle Obama was a guy.

(Since then, Amazon, along with Apple, Facebook and Google, has refused to let anyone test its facial-recognition technologies.)

Unlike the eugenicists and phrenologists of old, facial recognition algorithms are racist without preconception or bigotry. A closer analogy would be to compare them to German concentration-camp guards, who — when tried for war crimes — advanced the argument that they were not motivated by race hatred. They were simply, dutifully, mindlessly following orders. They had no opinion either way.

This analogy also fails because the guards were lying. Algorithms can’t lie. Algorithms don’t think; you can’t have a conversation with one. They have no opinions. In this respect, algorithms are inferior to dogs. I’ve had conversations — largely one-sided but strangely edifying — with dogs, and I’ve gotten my point across. Moreover, dogs have opinions. They like some people and dislike others, and their reasoning for these preferences is often evident.

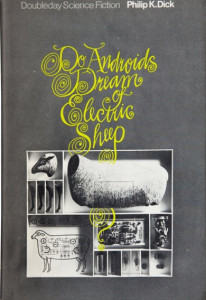

Algorithms, machines and robots might someday catch up with Rin Tin Tin and form viewpoints. But, as sci-fi prophets Arthur C. Clarke and Philip K. Dick have intimated, this might not be a welcome breakthrough. If you recall, in the film Blade Runner, based on Dick’s novel, Do Androids Dream of Electric Sheep?, Harrison Ford makes little headway when he tries to persuade a couple of highly opinionated replicants named Roy and Pris that they ought to cheerfully acquiesce to their own destruction. Instead, they try to kill him.

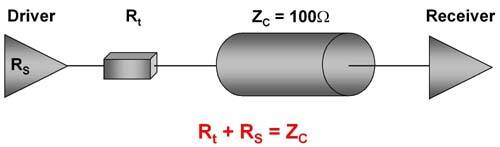

Luckily, our technology has yet to create artificial intelligentsia as balky as Roy, Pris, HAL 9000 (2001) and the devious Ash in Alien. A more accurate movie parallel — I think about these things — might be Joshua, the WOPR electronic brain in War Games. Joshua had enough power and speed to simulate a million H-bomb scenarios in a minute or so but could not be swayed, by reason and evidence, that the very concept of global thermonuclear war was futile and — at bottom — evil. Joshua only arrived at this purely pragmatic conclusion after processing several yottabytes of data and blowing every fuse at NORAD.

Data, not persuasion

Data, not persuasion, convinced Joshua not to end the world. Conversely and perversely, there are intelligent humans who could not be similarly convinced, regardless of the volume of data provided. Some zealots today cling implacably to the belief that a nuclear war can be won, and that several billion human lives is a tolerable price to pay for that sweet victory.

This sort of wrongheaded but thoroughly human opinion is unattainable in any foreseeable iteration of algorithms. It will be undesirable when it becomes possible. And it will be easier to accomplish with nuclear holocaust than with facial recognition.

The human face, which appears in infinite variety and can be altered in a thousand ways, changes constantly through the stages of life. It is vastly more various than the face of war. There is no extant algorithm that can master all its nuance, expression and mutability, nor does that magic formula seem imminent. In the meantime, facial recognition technology will only be as objective and accurate as the humans who are creating it. This means that facial AI today is both as racist as the Ku Klux Klan and as innocent of racism as a newborn baby. And we don’t know when it will be which.

Because AI is so impenetrably neutral, so passively absorptive of the data fed into it, it cannot help but reflect the biases — conscious or oblivious — lodged in the hearts and minds of its human developers. It will have opinions without knowing how to form an opinion. The consequences are both ominous and familiar.

Throughout human history, bigots have been influencing, and often dictating, public life, calling too many shots and poisoning society. Now, naively, we have well-meaning technologists creating surveillance devices that can’t tell girls from boys, Asian grandmothers from crash-test dummies, or criminals from saints.

The unaccountable purveyors of these astigmatic robo-spies (namely Amazon, Apple, Facebook and Google) are putting them into the hands of agencies that have the power to assemble dossiers, issue subpoenas, kick open doors, deny bail, put people in jail, and throw children out of the country.

It’s been argued that studies evaluating facial-recognition AI are flawed only because the samples have been too small. But the National Institute of Standards and Technology study was huge. It tested 189 facial-recognition algorithms from 99 developers. And it found, like every previous study, that features as basic as five o’clock shadow, comb-overs, skin tone and makeup tend to befuddle the algorithm.

Police and prosecutors are coming around to the realization that eyewitness identification is an unreliable tool for solving crimes, because it is fraught with haste, myopia, fear, prejudice and a host of other human flaws.

We’re learning now that the algorithms devised to digitally correct this sort of mortal error are prone to the same emotions, bigotry and malice as any bumbling eyewitness. This is true because we can’t help but make our machines in our own malleable and elusive image.
